
- exploratory

adjective as in preliminary

Strongest matches

- preparatory

Strong matches

- fundamental

adjective as in prolegomenous

- introductory

adjective as in searching

- penetrating

- experimental

- fact-finding

- inquisitive

adjective as in trial

Strongest match

- preliminary

- probationary

- provisional

Example Sentences

The following bits of journalism are more exploratory than hard-hitting.

Campa-Najjar, who had formed an exploratory committee, announced earlier this week that he believed the district should be represented by a woman of color after also releasing polling showing he was the leading Democrat in the race.

This is more of an exploratory technique, so test yourself and try finding what feels good to you.

Former San Diego Mayor Kevin Faulconer took the next stop in his long, drawn-out flirtation with a run for governor of California by announcing Monday that he’s formed an exploratory committee for a bid.

Essentially, they think an ancestral archaeon began reaching into the world around it and associating with bacteria through these exploratory blebs of membrane.

Unlike former Florida Gov. Jeb Bush and Sen. Rand Paul of Kentucky, Huckabee is not immediately forming an exploratory committee.

Rand Paul announced he would form an exploratory committee for a Senate campaign at just the right time, in May 2009.

Gorgeous gave them an opportunity to take on a serious subject in a fun, exploratory way, McGill says.

It is not an efficient way to write, but I love that exploratory phase, when even bad writing somehow seems promising.

In February, he formed an exploratory committee for his congressional bid.

From this exploratory trip, the boats returned to their newly named harbour of Brest, on the 13th.

It is the last book to which he should return at the close of his exploratory voyages.

In the latter case paring becomes necessary as an exploratory means to diagnosis.

The thinking of things out carefully over a second and third cup of coffee, cautious self exploratory reasoning.

This was our seventh exploratory trip after our sixth landing since entering the field of the sun Ponthis.

Related Words

Words related to exploratory are not direct synonyms, but are associated with the word exploratory.

adjective as in introductory, initial

adjective as in probing

adjective as in experimental

From Roget's 21st Century Thesaurus, Third Edition Copyright © 2013 by the Philip Lief Group.

Essay Papers Writing Online

Mastering the art of writing an exploratory essay – a comprehensive guide for success.

Exploratory essays are a unique form of writing that allows the writer to explore a topic without coming to a definitive conclusion. Instead, the goal is to delve into the subject, gather information from various sources, and present a balanced analysis of different perspectives. This type of essay is ideal for examining complex issues or controversial topics where there may be no clear-cut answer.

When writing an exploratory essay, it’s essential to maintain an open mind and approach the topic with a sense of curiosity. Rather than arguing a specific point, the writer should focus on presenting a variety of viewpoints and exploring the nuances of the issue. This can lead to a richer understanding of the topic and encourage critical thinking.

In this guide, we will provide you with tips and examples to help you craft a compelling exploratory essay. From brainstorming ideas to structuring your essay and incorporating research, we will walk you through the key steps to creating a thought-provoking piece of writing that engages readers and encourages them to consider multiple perspectives.

Tips for Crafting an Exploratory Essay

1. Choose a compelling topic that sparks curiosity and invites exploration.

2. Conduct thorough research to gather diverse perspectives and sources on the topic.

3. Clearly define the problem or question you intend to explore in your essay.

4. Structure your essay with an introduction, body paragraphs presenting different viewpoints, and a conclusion.

5. Use a neutral tone and aim to present various perspectives without bias.

6. Engage with the sources you’ve collected and analyze them critically.

7. Include personal insights and reflections based on the research conducted.

8. Provide a summary of the key points and conclusions drawn from the exploration.

9. Take the time to revise and edit your essay to ensure clarity and coherence.

10. Embrace the process of exploration and discovery throughout your writing journey.

Understand the Purpose

An exploratory essay is a unique type of academic writing that allows writers to delve into a topic without forming a definite thesis statement. The purpose of an exploratory essay is to explore a topic, gather information, and present different perspectives on the issue. It is a journey of discovery where writers can explore ideas, consider different viewpoints, and ultimately come to a deeper understanding of the topic.

When writing an exploratory essay, keep in mind that the goal is not to argue a particular point of view or persuade the reader to agree with your opinion. Instead, focus on presenting a variety of perspectives and viewpoints, giving your readers a comprehensive understanding of the topic. By approaching the topic with an open mind and a willingness to explore different ideas, you can create a rich and engaging exploratory essay that encourages critical thinking and thoughtful reflection.

Choose an Intriguing Topic

Choosing a compelling topic is crucial when writing an exploratory essay. Look for subjects that spark your curiosity and offer multiple perspectives to explore. Consider current events, controversial issues, or emerging trends that are relevant to your interests or field of study. A thought-provoking topic will not only captivate your readers but also inspire in-depth research and critical analysis.

Conduct Thorough Research

Research is a crucial part of writing an exploratory essay. It is essential to gather as much relevant information as possible to form a comprehensive understanding of the topic. Begin by exploring various sources such as books, journals, articles, and online resources. Take notes on key points, interesting facts, and different perspectives on the subject.

Create a detailed outline of the main ideas and arguments you want to address in your essay. Organize your research findings in a systematic manner to ensure that you have all the necessary information to support your arguments. Consider different viewpoints and opinions to present a well-rounded discussion in your exploratory essay.

Tip: Use reputable sources and make sure to cite them properly to avoid plagiarism.

Organize Your Ideas Effectively

When writing an exploratory essay, it’s essential to organize your ideas effectively to ensure clarity and coherence in your writing. One effective way to do this is by creating an outline before you begin writing. An outline will help you structure your thoughts and arrange them in a logical order.

Another helpful tip is to group related ideas together. This will make it easier for your readers to follow your argument and understand your perspective. You can use headings and subheadings to categorize your ideas and make your essay more organized.

Additionally, using transitional words and phrases can help you connect your ideas smoothly and guide your readers through your essay. Words like “however,” “therefore,” and “in conclusion” can signal shifts in your argument and keep your readers engaged.

Remember to review and revise your essay after writing it to ensure that your ideas flow cohesively and support your thesis statement. By organizing your ideas effectively, you can create a compelling exploratory essay that engages your readers and explores complex topics in-depth.

Develop a Strong Thesis Statement

One of the most crucial aspects of writing an exploratory essay is developing a strong thesis statement. Your thesis statement is the main idea or argument that you will explore and support throughout your essay. It should be clear, concise, and specific, providing a roadmap for your readers to understand the purpose of your exploration.

When crafting your thesis statement, consider the following tips:

- Clearly define the topic or issue you will be exploring in your essay. Avoid vague or broad statements that can confuse readers.

- Express your position or opinion on the topic. Your thesis statement should indicate your perspective on the issue.

- Explain why the topic is significant or why it is worth exploring. Give your readers a reason to care about the issue you are discussing.

- Your thesis statement should not be a statement of fact but rather a claim that can be debated or challenged. This will stimulate discussion and analysis in your essay.

By following these tips, you can develop a strong thesis statement that sets the tone for your exploratory essay and guides your readers through your exploration of the topic.

Use a Clear Structure

When writing an exploratory essay, it’s important to use a clear structure that guides your readers through your exploration of a topic. A well-organized essay will help you present your ideas in a logical and easy-to-follow manner. Here are some tips to help you create a clear structure for your exploratory essay:

- Introduction: Start your essay with an introduction that provides background information on the topic you’ll be exploring. Clearly state your research question or thesis statement to give readers an idea of what to expect.

- Body Paragraphs: Divide the body of your essay into several paragraphs, each focusing on a different aspect of the topic. Use topic sentences to introduce each paragraph and provide supporting evidence or examples to back up your points.

- Exploration Stage: In the exploration stage, consider different perspectives, arguments, and viewpoints on the topic. Be open-minded and present a balanced view of the issue.

- Conclusion: Wrap up your essay with a conclusion that summarizes your findings and key points. Reflect on what you’ve learned through the exploration process and suggest possible next steps or areas for further research.

By using a clear structure in your exploratory essay, you’ll make it easier for your readers to follow your thoughts and engage with your ideas. Remember to also use transitions between paragraphs to ensure a smooth flow of information throughout your essay.

Provide Compelling Examples

One of the most effective ways to enhance the quality of your exploratory essay is by including compelling examples. Examples help to illustrate your points and make them more relatable to readers. They can be drawn from real-life situations, historical events, personal experiences, or even hypothetical scenarios.

When selecting examples for your essay, make sure they are relevant to the topic and help to clarify your argument. Avoid using examples that are too clichéd or overused, as they may not have the desired impact on your audience.

Additionally, it’s important to provide a variety of examples to support different aspects of your exploration. This will demonstrate your depth of research and understanding of the topic. Use a mix of specific and general examples to create a well-rounded argument that is both informative and engaging.

Remember to properly introduce and explain each example to ensure that your readers can follow your line of reasoning. By including compelling examples in your exploratory essay, you can effectively enhance the persuasiveness and credibility of your arguments.


BibGuru Blog


How to write an exploratory essay [Updated 2023]

Unlike other types of essays, the exploratory essay does not present a specific argument or support a claim with evidence. Instead, an exploratory essay allows a writer to "explore" a topic and consider tentative conclusions about it. This article covers what you need to know to write a successful exploratory essay.

What is an exploratory essay?

An exploratory essay considers a topic or problem and explores possible solutions. This type of paper also sometimes includes background about how you have approached the topic, as well as information about your research process.

Whereas other types of essays take a concrete stance on an issue and offer extensive support for that stance, the exploratory essay covers how you arrived at an idea and what research materials and methods you used to explore it.

For example, an argumentative essay on expanding public transportation might argue that increasing public transit options improves citizens' quality of life. However, an exploratory essay would provide context for the issue and discuss what data and research you gathered to consider the problem.

What to include in an exploratory essay

Importantly, an exploratory essay does not reach a specific conclusion about a topic. Rather, it explores multiple conclusions and possibilities. So, for the above example, your exploratory essay might include several viewpoints about public transit, including research from urban planners, transportation advocates, and other experts.

Finally, an exploratory essay will include some reflection on your own research and writing process. You might be asked to draw some conclusions about how you could tackle your topic in an argumentative essay or you might reflect on what sources or pieces of evidence were most helpful as you were exploring the topic.

Ultimately, the primary goal of an exploratory essay is to make an inquiry about a topic or problem, investigate the context, and address possible solutions.

What to expect in an exploratory essay assignment

This section discusses what you can expect in an exploratory essay assignment, in terms of length, style, and sources. Instructors may also provide you with an exploratory essay example or an assignment rubric to help you determine if your essay meets the appropriate guidelines.

The expected length of an exploratory essay varies depending on the topic, course subject, and course level. For instance, an exploratory essay assigned in an upper-level sociology course will likely be longer than a similar assignment in an introductory course.

Like other essay types, exploratory essays typically include at least five paragraphs, but most range from a few pages to the length of a full research paper.

While exploratory essays will generally follow academic style guidelines, they differ from other essays because they tend to utilize a more reflective, personal tone. This doesn't mean that you can cast off academic style rules, however.

Rather, think of an exploratory essay as a venue for presenting your topic and methods to a sympathetic and intelligent audience of fellow researchers. Most importantly, make sure that your writing is clear, correct, and concise.

As an exploration of your approach to a topic, an exploratory essay will necessarily incorporate research material. As a result, you should expect to include a bibliography or references page with your essay. This page will list both the sources that you cite in your essay, as well as any sources that you may have consulted during your research process.

The citation style of your essay's bibliography will vary based on the subject of the course. For example, an exploratory essay for a sociology class will probably adhere to APA style, while an essay in a history class might use Chicago style.

Exploratory essay outline and format

An exploratory essay utilizes the same basic structure that you'll find in other essays. It includes an introduction, body paragraphs, and a conclusion. The introduction sets up the context for your topic, addresses why that topic is worthy of study, and states your primary research question(s).

The body paragraphs cover the research that you've conducted and often include overviews of the sources that you've consulted. The conclusion returns to your research question and considers possible solutions.

Introduction

The introduction of an exploratory essay functions as an overview. In this section, you should provide context for your topic, explain why the topic is important, and state your research question:

- Context includes general information about the topic. This part of the introduction may also outline, or signpost, what the rest of the paper will cover.

- Topic importance helps readers "buy in" to your research. A few sentences that address the question, "so what?" will enable you to situate your research within an ongoing debate.

- The last part of your introduction should clearly state your research question. It's okay to have more than one, depending on the assignment.

If you were writing an exploratory essay on public transportation, you might start by briefly introducing the recent history of public transit debates. Next, you could explain that public transportation research is important because it has a concrete impact on our daily lives. Finally, you might end your introduction by articulating your primary research questions.

While some individuals may choose not to utilize public transportation, decisions to expand or alter public transit systems affect the lives of all. As a result of my preliminary research, I became interested in exploring whether public transportation systems improve citizens' quality of life. In particular, does public transit only improve conditions for those who regularly use these systems? Or, do improvements in public transportation positively impact the quality of life for all individuals within a given city or region? The remainder of this essay explores the research around these questions and considers some possible conclusions.

Body paragraphs

The body paragraphs of an exploratory essay discuss the research process that you used to explore your topic. This section highlights the sources that you found most useful and explains why they are important to the debate.

You might also use the body paragraphs to address how individual resources changed your thinking about your topic. Most exploratory essays will have several body paragraphs.

One source that was especially useful to my research was a 2016 study by Richard J. Lee and Ipek N. Sener that considers the intersections between transportation planning and quality of life. They argue that, while planners have consistently addressed physical health and well-being in transportation plans, they have not necessarily factored in how mental and social health contributes to quality of life. Put differently, transportation planning has traditionally utilized a limited definition of quality of life and this has necessarily impacted data on the relationship between public transit and quality of life. This resource helped me to broaden my conception of quality of life to include all aspects of human health. It also enabled me to better understand the stakeholders involved in transportation decisions.

Conclusion

Your conclusion should return to the research question stated in your introduction. What are some possible solutions to your questions, based on the sources that you highlighted in your essay? While you shouldn't include new information in your conclusion, you can discuss additional questions that arose as you were conducting your research.

In my introduction, I asked whether public transit improves quality of life for all, not simply for users of public transportation. My research demonstrates that there are strong connections between public transportation and quality of life, but that researchers differ as to how quality of life is defined. Many conclude that public transit improves citizens' lives, but it is still not clear how public transit decisions affect non-users, since few studies have focused on this distinct group. As a result, I believe that more research is needed to answer the research questions that I posed above.

Frequently Asked Questions about exploratory essays

You should begin an exploratory essay by introducing the context for your topic, explaining the topic's importance, and outlining your original research question.

Like other types of essays, the exploratory essay has three primary parts: an introduction, body paragraphs, and a conclusion.

Although an exploratory essay does not make a specific argument, your research question technically serves as your thesis.

Yes, you can use "I" throughout your paper. An exploratory essay is meant to explore your own research process, so a first-person perspective is appropriate.

You should end your exploratory essay with a succinct conclusion that returns to your research question and considers possible answers. You can also end by highlighting further questions you may have about your research.


exploratory essay

Glossary of Grammatical and Rhetorical Terms


- Ph.D., Rhetoric and English, University of Georgia

- M.A., Modern English and American Literature, University of Leicester

- B.A., English, State University of New York

An exploratory essay is a short work of nonfiction in which a writer works through a problem or examines an idea or experience, without necessarily attempting to back up a claim or support a thesis. In the tradition of the Essays of Montaigne (1533-1592), an exploratory essay tends to be speculative, ruminative, and digressive.

William Zeiger has characterized the exploratory essay as open: "[I]t is easy to see that expository composition—writing whose great virtue is to confine the reader to a single, unambiguous line of thought—is closed, in the sense of permitting, ideally, only one valid interpretation. An 'exploratory' essay, on the other hand, is an open work of nonfiction prose. It cultivates ambiguity and complexity to allow more than one reading or response to the work." ("The Exploratory Essay: Enfranchising the Spirit of Enquiry in College Composition." College English, 1985)

Examples of Exploratory Essays

Here are some exploratory essays by famous authors:

- "The Battle of the Ants," by Henry David Thoreau

- "How It Feels to Be Colored Me," by Zora Neale Hurston

- "Naturalization," by Charles Dudley Warner

- "New Year's Eve," by Charles Lamb

- "Street Haunting: A London Adventure," by Virginia Woolf

Examples and Observations:

- "The expository essay tries to prove all of its contentions, while the exploratory essay prefers to probe connections. Exploring links between personal life, cultural patterns, and the natural world, this essay leaves space for readers to reflect on their own experience, and invites them into a conversation..." (James J. Farrell, The Nature of College. Milkweed, 2010)

- "I have in mind a student writing whose model is Montaigne or Byron or DeQuincey or Kenneth Burke or Tom Wolfe...The writing is informed by associational thinking, a repertory of harlequin changes, by the resolution that resolution itself is anathema. This writer writes to see what happens." (William A. Covino, The Art of Wondering: A Revisionist Return to the History of Rhetoric. Boynton/Cook, 1988)

Montaigne on the Origin of the Essays

"Recently I retired to my estates, determined to devote myself as far as I could to spending what little life I have left quietly and privately; it seemed to me then that the greatest favour I could do for my mind was to leave it in total idleness, caring for itself, concerned only with itself, calmly thinking of itself. I hoped it could do that more easily from then on since with the passage of time it had grown mature and put on weight. But I find—

Variam semper dant otia mentis [Idleness always produces fickle changes of mind]*

—that, on the contrary, it bolted off like a runaway horse, taking far more trouble over itself than it ever did over anyone else; it gives birth to so many chimeras and fantastic monstrosities, one after another, without order or fitness, that, so as to contemplate at my ease their oddness and their strangeness, I began to keep a record of them, hoping in time to make my mind ashamed of itself." (Michel de Montaigne, "On Idleness." The Complete Essays, trans. by M.A. Screech. Penguin, 1991)

*Note: Montaigne's terms are the technical ones of melancholy madness.

Characteristics of the Exploratory Essay

"In the quotation from Montaigne [above], we have several of the characteristics of the exploratory essay: First, it is personal in subject matter, finding its topic in a subject that is of deep interest to the writer. Second, it is personal in approach, revealing aspects of the writer as the subject at hand illuminates them. The justification for this personal approach rests in part on the assumption that all people are similar; Montaigne implies that, if we look honestly and deeply into any person, we will find truths appropriate to all people. Each of us is humankind in miniature. Third, notice the extended use of figurative language (in this case the simile comparing his mind to a runaway horse). Such language is also characteristic of the exploratory essay." (Steven M. Strang, Writing Exploratory Essays: From Personal to Persuasive. McGraw-Hill, 1995)


Exploratory Essay: Definition, Outline & Examples


You may be asking yourself, “What is exploratory writing?” An exploratory essay lays out what is commonly understood about a subject while presenting the writer's own points of view on it. Writing a strong one requires careful reflection. Although an exploratory essay does not have to prove a single answer, it still follows certain rules. A wide range of topics can be investigated in exploratory essays, including business, education, and psychology, and the essays themselves can range from a brief paper to a lengthy research report. In this article, we discuss exploratory essay samples, outline, and format, and offer tips on how to write this paper.

What Is an Exploratory Essay: Definition

An exploratory essay is a type of writing in which the author examines potential solutions to an issue. Simply put, it provides an overview of the issue and draws some preliminary judgments about the proposed solutions. Exploratory essays let you come to understand a subject fully without worrying about being right or wrong. Your principal objective when preparing this essay is to investigate the topic thoroughly first, then consider and assess several distinct viewpoints on it. These papers are used in both colleges and commercial settings. Examining at least three viewpoints helps you grasp the problem accurately and work toward the best answer. Exploratory essays aim to investigate the subject critically and then present the results of that research to your readers. Depending on its objective, an exploratory essay can take many forms; a literature review in sociology, for instance, is one example.

Peculiarities of Exploratory Writing

Exploratory essay writing is distinct from other types of essays. You write to learn about an issue and to work toward a solution to the problem it presents. An exploratory paper discusses how you arrived at an idea and sheds some light on the approaches you used to examine it. Other essay types, such as argumentative essays, aim to convince readers of your standpoint; an exploratory paper, by contrast, begins without a firm perspective. While you might favor one idea over others, you will not conclude the paper with a single definitive solution. Instead, the paper considers a range of findings and alternatives.

Exploratory Essay Outline

Generating an outline for an exploratory essay is a crucial step. Many writers skip it because they want to finish quickly; avoid this mistake, since it can cause serious problems later. Once you have drafted an outline, you are ready to start. An outline streamlines the entire writing process because you already know your objectives and where to place your quotations. It also shows you the next steps to take and divides your work into manageable parts. This is especially helpful for writers who dislike long stretches of thinking and writing, since they can flesh out one key area at a time and then pause. Finally, an outline saves time because the resulting essay will be straightforward, accurate, and uncomplicated, and your lecturer may appreciate the care you put into organizing your work. The exploratory essay outline format is as follows:

The opening section (introduction and thesis statement)

- Present your subject and its significance.

- Provide background information.

- State a thesis statement.

Body paragraph 1

- Explain your source, including the author and publication date.

Body paragraph 2

- Discuss crucial information about your issue from the source.

- Explain why the information is valuable.

Body paragraph 3

- Write a reflection on how the material benefited you, changed the way you thought about the issue, or even did not meet your goals.

Last section (conclusion)

- Reintroduce your topic.

- Outline some potential solutions.

How to Write an Exploratory Essay

Preparing an exploratory essay is simple, but the process differs from that of other essays. Here are five steps for writing an exploratory essay.

Step 1: Select a topic

Give preference to contentious exploratory essay topics with several opposing viewpoints that can be investigated. Avoid subjects with insufficient evidence or ones that offer only a simple "for" or "against" argument. Begin with a more general topic. An example of a good topic would be "Impact of digital platforms on family and group values."

Step 2: Select three points

After researching the subject, choose at least three viewpoints to examine in your body paragraphs. During research, consider several ideas related to the topic and pinpoint arguments for and against it. Note that an exploratory essay is different from a persuasive essay. An exploratory essay seeks to find and analyze numerous points of view on a particular topic, whereas a persuasive essay outlines the different sides of a topic in order to support one over the others.

Step 3: Decide on the readers

Decide on your target audience and any constraints you might encounter in addressing it. Consider anything that reveals why specific attributes, ideas, and situations lead people to hold their views.

Step 4: Answer questions

Respond to the questions raised by your research, and determine how to address any concerns the work may raise.

Step 5: Commence writing

Finally, begin writing, using a sample exploratory essay for reference. Make sure you follow the steps discussed above to make your exploratory essay stand out.

Exploratory Essay Introduction

Before writing, it is vital to understand how to start an exploratory essay. The introduction lays out background information on your issue and explains its significance. It also outlines the research questions. This section can summarize the aspects covered in the rest of your essay and the importance of the topic, which encourages readers to trust your study. Do this by including a few sentences that answer the "so what?" question. To spark your readers' interest, begin your introduction with an attention grabber. This may be a claim based on your assessment of a situation, or anything that makes clear why the issue matters to you. If your theme permits, expand on the hook by addressing some of the underlying causes of the issue. State your main claim explicitly at the end of your introduction.

Exploratory Essay Thesis Statement

The exploratory essay thesis statement should briefly summarize the essay's central idea and explain its significance to readers. Mapping out your facts is crucial when creating an effective thesis. In an exploratory essay, you raise and explore questions about a subject rather than argue a position. Choose a principal subject for your paper that is closely tied to the thesis. For example, if your topic is "How Can Technology Be Improved in Schools?", the exploratory thesis statement might be:

Technological advancements significantly affect how individuals are educated and their quality of life, from calculators, which made math simpler, to text editors, which revolutionized how research papers are produced and delivered. Yet more advanced technology should be adopted to increase interactivity and improve overall school engagement.

Exploratory Essay Body

The body is the next part of the exploratory essay structure. The overriding aim of the body paragraphs is to offer facts and arguments in support of your thesis. Begin by explaining why you chose to pursue the first idea that came to mind. Next, discuss the types of materials you used and the data you gleaned from them. Evaluate the researched data in relation to the subject matter and present your analysis. Describe how the answers and resources you found changed your perspective on the issue. If possible, explain how they pointed you in a different direction. Then use a transition to help readers move from one section to the next. Keep this basic format for all subsequent points and references. The second section of the body complements the first by making room for other points of view. It seeks to explain why people see things from their own perspective.

Exploratory Essay Conclusion

Conclude an exploratory essay by condensing the research questions, suggested solutions, and views you picked up along the way. First, restate your thesis. This strengthens your argument and draws the reader's focus back to the central idea. Next, summarize the three supporting arguments you discussed in the body and briefly recap their relevance. This demonstrates that you have thoroughly supported your exploratory thesis. Your ending must not introduce brand-new information, but you can mention new questions that arose during your research. You may also share your thoughts on why more research should be conducted. It is a good idea to end by urging your readers to take action.

Exploratory Essay Format

There are widely used formatting guidelines for producing high-quality papers. Each style has its own characteristics and is maintained by a major academic organization. To format an exploratory essay, use APA, Chicago, or MLA style. The primary goal of these styles is to present knowledge to readers as clearly and consistently as possible. APA is used in Education, Psychology, and the Sciences. It requires uniform margins on the top, bottom, left, and right of the page, and an APA paper may include an abstract. MLA is used in the Humanities. It requires one-inch margins throughout, and the first word of each paragraph should be indented. Chicago style is widely used in History and Art. The text should be double-spaced, and paragraphs should be indented by 0.5 inches.

Exploratory Essay Examples

To help you understand the topic better, we have prepared an exploratory essay example that illustrates what we have discussed in this article. Feel free to download the available materials or use the short exploratory essay sample as a source of inspiration. We hope this reference helps you organize your thoughts, give direction to your research, and create an outstanding essay.


Exploratory Writing Proofreading Checklist

A checklist helps learners understand the skills required for preparing essays. It gives hesitant writers an easy way to make sure they have included the essential components. Below is a simple checklist for revising an exploratory draft.

- Is the opening sentence intriguing? Does it encourage you to read more?

- Do the transitions between the body paragraphs make sense?

- Do the language, paper length, or sentence arrangement require any changes?

- Is the title of the essay fascinating and relevant?

- Have you used enough sources to underpin your arguments?

- Do you follow the formatting requirements given by your professor (such as MLA, Chicago, or APA paper format)?

Extra Tips on Writing an Exploratory Paper

Writing an exploratory paper is not a walk in the park, and a few practical tips can help you get started. For instance, consider the paper's length and style, and which sources to use. Here are a few extra tips.

- Conduct detailed research on the topic: Whatever your intentions for writing an exploratory paper, research is crucial. Online resources offer a wealth of written information. Check a variety of sources and choose those most pertinent to your work.

- Begin early: Don't put off your task. You have a limited amount of time to complete all the necessary steps, so get a head start to have enough time to produce a good paper.

- Design an outline: Creating an outline is crucial because it ensures you cover all the information that needs to be included in your exploratory writing.

- Take notes: At the end of your research, you will need to review your notes. Reorganize points logically, and remember that every claim needs a clear transition. This ensures the material flows smoothly and makes your work easier to understand.

- Proofread your work: Errors are normal in a draft, so give yourself enough time to edit your paper. Check that it is correct and coherent. Above all, believe in your ability to make it work.

Final Thoughts on Exploration Essay

Exploratory essays provide a deeper understanding of an issue. They explain its causes, its effects on readers, and potential solutions. In other words, an exploratory essay sheds light on a subject and examines initial findings. Learners should understand how an exploratory paper is built, so do not hesitate to read through exploratory writing examples. Delve as deeply as you can into the issue. However, do not try to persuade the audience to adopt your viewpoint. The key is to present the facts and responses impartially so that readers understand what the paper covers.


FAQ About Exploratory Essays

1. What is the purpose of an exploratory essay?

An exploratory essay asks you to consider and respond to various ideas instead of a single opinion. It is concerned more with a subject than with a thesis, which means the paper tracks your research activities and the thoughts that follow from them. The essay addresses questions about specific content as well as rhetorical questions about potential solutions to the problem.

2. What is the difference between an exploratory essay and argumentative essay?

Argumentative papers defend a particular perspective, while exploratory essays examine several. An exploratory essay may include some self-reflection on your writing process. In an argumentative essay, you may be asked to formulate findings on how to approach your subject. You may also discuss the evidence and sources that were useful during your research.

3. How do you start an exploratory essay?

To begin an exploratory essay, introduce the background of your issue. Then discuss its significance and lay out your primary research question. This academic work relies heavily on reliable information, so be careful to note all the pertinent details from your sources and incorporate them as supporting material in your work.

4. Can I use the first person in an exploratory essay?

A first-person viewpoint is acceptable because an exploratory essay should discuss your research process. As a general rule, academic writing avoids first-person pronouns, because they make an article sound less formal than third-person pronouns, which give the work a more neutral style. Exploratory writing, however, demands subjective judgments, so a first-person tone is ideal.

Daniel Howard is an essay-writing guru. He helps students create essays that strike a chord with readers.


Exploratory Essay: The A to Z Guide

01 November, 2020


Author: Kate Smith

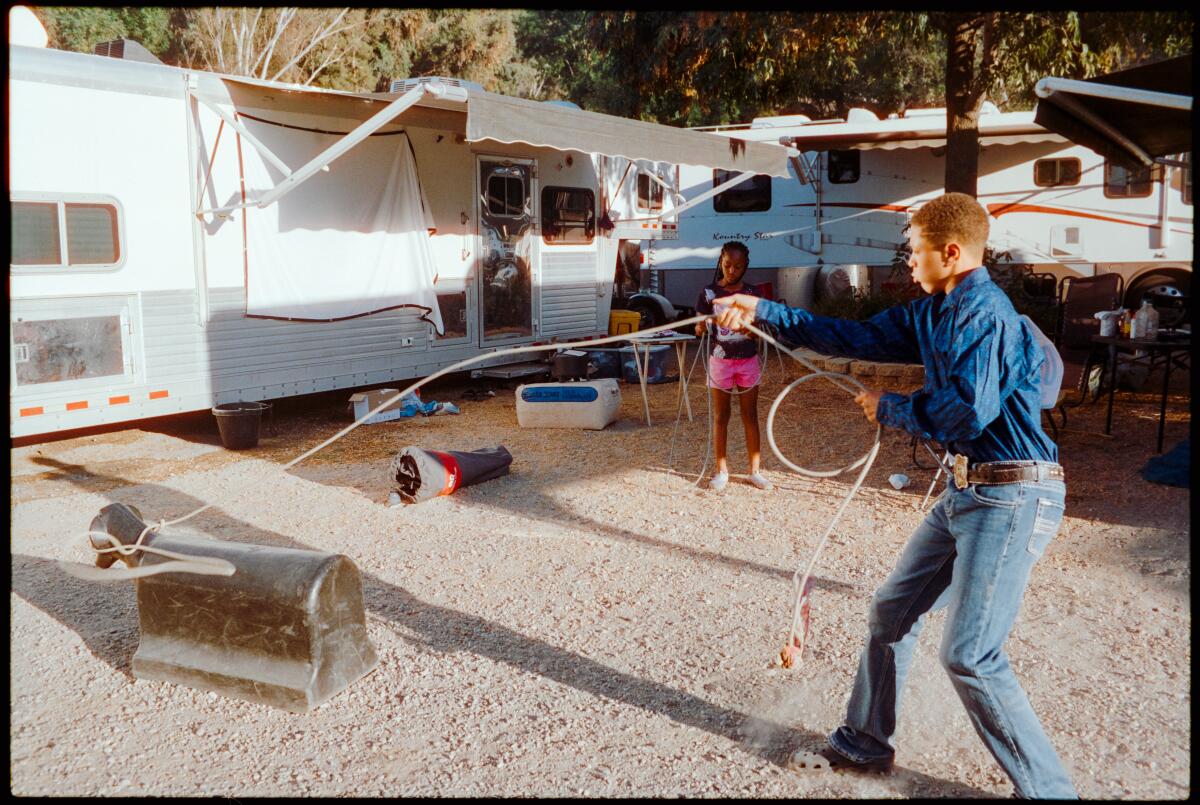

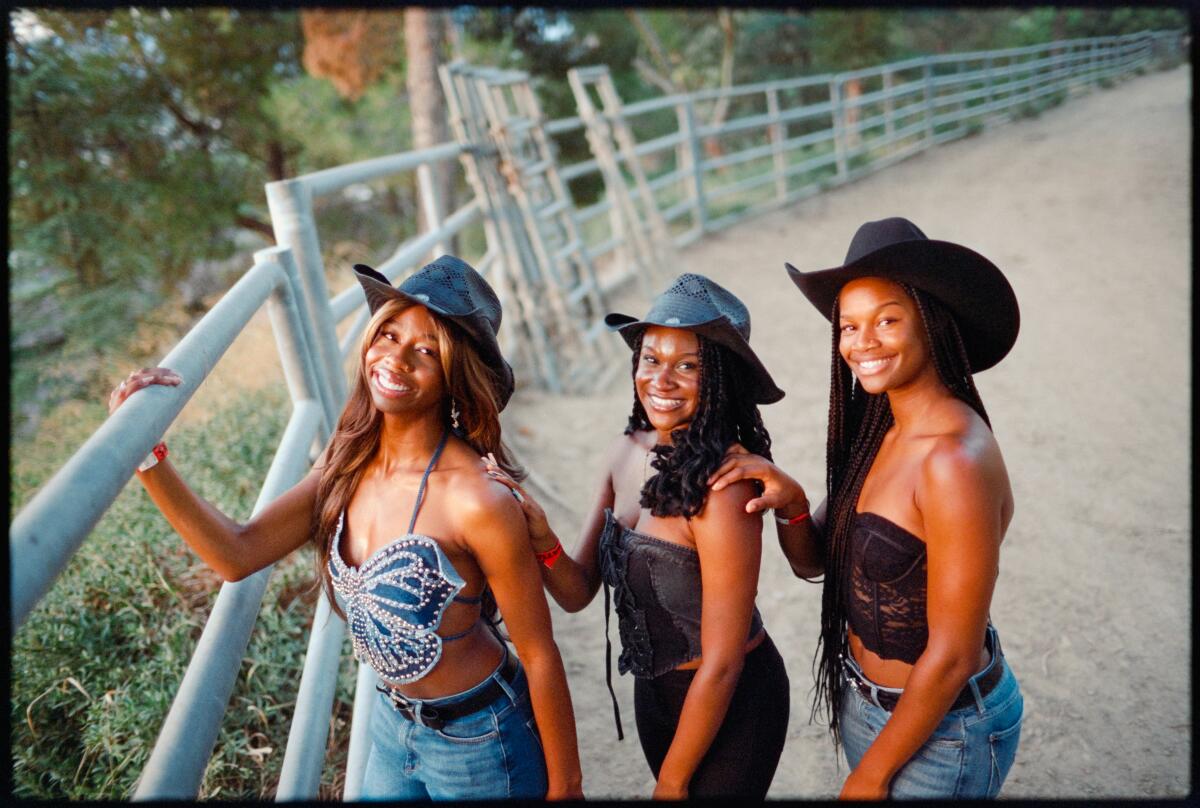

An exploratory essay, the most fun kind of essay to write, differs from every other essay. Here, there is no need for additional research; instead, you explore your ideas and the ideas of others. An exploratory essay involves speculation, digression, and rumination.

Unlike other forms of writing, this essay does not try to impose its thesis on the reader. To write an excellent exploratory essay, you need to stick to its outline and think deeply about the topic. This article will help you understand what an exploratory essay is and walk you through the building blocks of an outstanding one.

What is an Exploratory Essay?

An exploratory essay, also called an investigative piece, is a speculatively written essay. Here, the writer reviews people's various views on an issue or topic. Afterward, they walk through the problem and give their own view without refuting others or drawing a biased conclusion.

This type of essay presents people's different perspectives on a topic from a neutral angle and, at the same time, highlights where they agree. To deliver a great essay, you need to think introspectively about the topic rather than indulge in idle speculation. An exploratory essay requires that you first reflect on the subject of discussion.

In practice, sentiment may cloud your judgment, especially when you're very passionate about the topic. You're likely to rush to a conclusion or try to influence others' opinions without hearing their take on the matter. An exploratory essay sets out to counter this tendency.

It is advisable to consider the reasoning of at least three people on a topic, event, or issue, since this lets you make a thorough presentation with detailed logical reasoning. Limiting your investigation to a few sources may produce only similar findings. Diversity adds spice to your presentation!

Exploratory Questions: Types

Having a clear understanding of the information you aim to get helps you stay on track while carrying out your investigation. Here are the types of exploratory questions you need to ask when writing this essay:

- Fact questions

- Convergent questions

- Divergent questions

- Evaluative questions

Fact questions

A fact question usually has an exact answer. These are the “who…?”, “what…?”, “where…?”, and “when…?” questions. They aim to establish the parties involved in the topic, event, or issue; what triggered the problem; whether there is a geographical dimension to it; and whether a similar case occurred in the past, which helps gauge the possibility of a future recurrence.

Example: Who invented the payphone?

Convergent questions

Though similar to a fact question, a convergent question differs in that it calls for a lengthier explanation. However, like a fact question, it has an exact answer. It often starts with words like “according to,” “why,” and “how.”

Example: According to most health scientists, what factor is most responsible for the coronavirus’s rapid spread?

Divergent questions

A divergent question, or idea question, aims to stimulate people's reasoning and draw out their ideas on a topic. It can have many acceptable answers. It often starts with words like “what if,” “how would,” and “how could.”

Example: How would you brew your coffee fast if your espresso machine broke down?

Evaluative questions

An evaluative question, also known as an opinion question, aims to draw out detailed opinions in order to reach a judgment on a topic or issue. It may start with words like “how well,” “do you think,” and “why should.”

Example: Do you think the lack of social amenities in a state is a result of poorly generated revenue or mismanagement?

Exploratory Essay Outline

Essay outlines help you organize your thoughts and structure your paragraphs better to avoid leaving out any relevant information while writing.

An excellent exploratory essay should follow this pattern:

- Introduction,

- Body paragraphs,

- Conclusion.

Essay Introduction

An introduction serves as a hook to gain the reader’s attention and keep them focused until the writing ends. This introduction must be informative, eye-catching, and promising. It should make the reader feel they would be missing out on vital information if they don’t remain engaged until the end.

This introduction should also outline the scope, describe the issue or topic being addressed, highlight its challenges, and include a brief overview of your approach to tackling the problem. This approach will have your reader yearning to know more.

A disorganized, vague, and error-filled introduction creates a poor overall impression of your writing. Starting your essay on the wrong foot can cause your reader's interest to drop! Writing a strong introduction might seem difficult, so below are some hacks for writing a compelling introduction:

- Start with a fascinating story related to the topic: Telling a story that connects with your readers’ emotions automatically buys their interest.

- Make the issue being tackled appear critical

- Describe the issue as an unresolved puzzle: People's problem-solving instincts will drive them to solve the riddle, so they will stay hooked on your essay.

- Cite a captivating quote

- Pose a thought-provoking question or series of questions: Provocative questions challenge accepted ideas and prompt the reader to rethink.

- Use statistics: Citing figures from reputable sources, describing past events, or noting how long the issue has lingered also does the trick.

Body Paragraphs

After the introduction, next comes the body of the essay. The body paragraphs extensively explain the highlighted scope, context, background information, and purpose of the topic under investigation. This part of the essay is divided into two segments:

- The body, part one

- The body, part two

The first part of the body aims to answer and clarify the following:

- What the issue or problem is

- How far the issue has escalated

- The groups discussing the issue

- The people for whom the topic is of utmost importance

- Past opinions from many authors

- Whether the writer shares the views of any of the readers

- Whether any force is preventing the audience from expressing their beliefs

- What the issue has demanded over time

- How interest in the issue has evolved and the values it conveys

Complementing the first part, the second part of the body gives room to air people's diverse views. It aims to explain why they understand the situation from the angles represented in the essay. When presenting their thoughts, take note of the following:

- Elaborate on their view of the issue

- Explain why they hold that perspective

- State the cases that shaped their opinions

- Support their perspectives with facts

- Compare their diverse points of view

Tips on writing impressive body paragraphs:

- Start each paragraph with a topic sentence, usually the main point of interest in a paragraph, and stress this sentence.

- Back this main interest for each paragraph up with facts, quotes, or data. These act as supporting points to give more meaning to the main point and lead to a logical conclusion.

- Try to keep the number of sentences in each paragraph balanced; where that is not possible, keep the counts close. Underwritten paragraphs may appear underdeveloped to the reader, while overwritten ones may seem overloaded.

Essay Conclusion

An exploratory essay conclusion does not simply repeat a summary of your findings. In your conclusion, state your contribution to the issue being discussed while maintaining a neutral tone toward others' perspectives.

As in the essay body, support your claims with arguments. Many people assume that a conclusion should summarize and support a single argument or opinion. This is far from the case.

While concluding, discuss your reflections and explain why you think further research on the topic you just explored is needed. Also, give your readers the impression that you are eager to keep the discussion going. It is a good idea to end by calling your readers or audience to action.

When drawing your conclusion, you should avoid:

- Stuffing it with unnecessary information. You should be concise while stating your insights, evaluation, and analysis.

- Statements like, “There may be other better methods” or “Better approaches to this issue state that…” These phrases undermine confidence in your opinion.

- Drifting from the initial subject of discussion. Ensure your conclusion is in line with your introduction's objectives.


Exploratory Essay Examples

Do not be deterred. Writing an exploratory essay isn’t as tedious as it may sound. Below are some great examples of exploratory essays you can study to learn the narration style:

- https://www.thoughtco.com/battle-of-ants-henry-david-thoreau-1690218

- https://www.thoughtco.com/how-it-feels-to-be-colored-me-by-zora-neale-hurston-1688772

30 Exploratory Essay Topics

Another aspect you may need help with is finding topics for exploratory essays. While you may be tempted to pick a topic at random, a good exploratory essay question should tick the following boxes:

- Must be arguable

- Must be unresolved

- Must have resources and facts to back people's opinions

- Must lie within your strengths

- Must be exciting

There is a wide range of categories to choose a topic from. Exploratory essay topics can be drawn from:

- Entertainment

- Music & Arts

- Religion, among others.

The following make for good exploratory essay topics:

- Children with distant parents tend to grow up more independent than those who live with theirs.

- The effects of divorce extend to the children.

- Handling a mobile phone at an early age inhibits a child's creativity.

- Smartphones result in poor communication through speech.

- How does technology curb job opportunities?

- Social media increases the rate of depression and anxiety among teens.

- Shelving pints of milk extend the life span.

- Professional sports affect the player’s psychological well-being.

- Is opera overrated?

- Actors make the best role models.

- Is e-learning the solution to poor teaching techniques?

- Do graphic sex scenes influence the high rate of rape?

- What is the possibility that you have a doppelganger on earth?

- Why don't women buy into the surrogacy idea when conceiving proves difficult?

- Does diversity in a workplace increase productivity?

- The maximum amount of money that guarantees happiness

- Which is healthier for newborn babies: processed milk or breast milk?

- Who suffers most during a divorce: the husband, wife, children, or close relatives?

- Who seeks divorce more often: men or women?

- Why don't inter-tribal marriages work out?

- Do marathon races impair the body's muscles?

- Does a high concentration of plastics increase the greenhouse effect in our environment?

- Is nuclear energy the future of energy resources?

- How important is it to site heavy industries away from residential areas?

- Will technology ease work stress or worsen it?

- Which makes for easier reading: hard copies or soft copies?

- What is the best online dating app?

- How does chemistry form between same-sex partners?

- Who is to blame for the high rate of immigration: friends or the government?

- Who shows the most moral decadence: boys or girls?

Writing Tips for an Exploratory Essay

Without the right mountaineering skills, a climber may find the summit unreachable. The same goes for this kind of writing: without these tips, you may struggle to write an exploratory essay.

Now note the following tips to land your five-star essay:

- Try to hear as many diverse perspectives as possible.

- Note your findings

- Reflect deeply on the topic

- Stick to the outline described earlier when writing

- Include visuals such as charts or images to back up claims, where available

- Write from the third-person perspective unless told otherwise

- Take time to proofread, and have someone else look over the essay as well.


Exploratory essay: basic strategies for compelling papers

Updated 25 Jul 2024

In academic writing, exploratory essays play a particular role. Unlike other essay forms, they do not aim to argue for a particular viewpoint or back up claims with proof. Rather, they give the writer the freedom to delve into a topic and draw tentative conclusions about it. In this article, we'll consider this genre's defining characteristics and methods for coherently organizing thoughts and discoveries. You'll find step-by-step guidelines on how to write an exploratory essay, make it stand out, and improve your writing skills.

What is an exploratory essay?

This is a type of academic text where the author analyzes a topic without necessarily arguing for a specific point of view or providing concrete evidence. Instead, the writer investigates different perspectives, analyzes various angles, and often ends with tentative conclusions or questions for further exploration. To better understand the exploratory essay definition, let’s consider its three main features:

- Consideration of diverse perspectives: Exploratory essays meticulously consider various groups of readers interested in the issue, thoughtfully delving into their points of view.

- Objective approach: This writing maintains an objective stance, presenting the topic impartially rather than proposing solutions. The authors aim to explain diverse positions comprehensively.

- Multiple viewpoints: While some issues may seem binary, exploratory texts go beyond simple either/or perspectives. They analyze various aspects in detail, revealing where opinions differ or overlap beyond the surface argument.

How does it differ from other essay types?

If you know how to write an argumentative essay, you may think these two assignment types are comparable. Still, they differ. An argumentative essay aims to persuade the reader to adopt a suggested position by presenting supporting arguments, whereas an exploratory text focuses on inquiry and discovery rather than on reaching a definitive conclusion. It allows for open-ended exploration and encourages critical thinking without pressure to take a firm stance.

Where is it used?

You may encounter this genre when the topic is complex or not well-defined, and the writer wants to analyze various viewpoints and potential solutions. It is common in academic settings, especially in disciplines like Sociology, Psychology, and Political Science, where issues are multifaceted and require nuanced analysis.

Exploratory essay format and length requirements

This writing follows a basic structure with an introduction, body paragraphs, and a conclusion. Consider using APA, MLA, or Chicago style for your document formatting. APA, commonly used in Psychology, the Sciences, and Education, requires uniform margins on all sides of the page and includes an abstract. MLA, suitable for the Humanities, calls for one-inch margins and indented first lines of paragraphs. Chicago style, prevalent in Art and History, calls for double-spaced text and 0.5-inch paragraph indentation.

As for the essay length, these texts can vary widely, ranging from a few pages to longer, more thorough examinations, depending on the complexity of the exploratory essay topics and the depth of analysis required. However, they are typically shorter than argumentative writing since they focus more on exploration and less on making a persuasive argument.

What to include in an exploratory essay: a basic outline

Below is a comprehensive outline, with example sentences, to guide your text's structure and content.

I. Introduction:

- Hook: Start with a statistic, captivating anecdote, or quote to engage the reader.

“Imagine a world where artificial intelligence governs every aspect of our lives, from healthcare to transportation, raising profound questions about the future of humanity.”

- Background information: Provide context and background information on the topic.

“Artificial intelligence (AI) has rapidly advanced in recent years, revolutionizing industries and challenging traditional notions of human intelligence and autonomy.”

- Thesis statement: Pose the main question or issue you'll study in the essay.

“This essay seeks to examine the ethical implications of AI in healthcare and its impact on patient care and medical professionals.”

II. Body paragraphs:

- Explanation of the issue: Provide a comprehensive topic overview, including its importance and relevance.

“AI in healthcare offers the potential for improved diagnosis, treatment, and patient outcomes, but it also raises concerns about privacy, bias, and job displacement.”

- Presentation of various perspectives: Explore different viewpoints, arguments, or approaches related to the subject.

“Some experts argue that AI can enhance diagnostic accuracy and efficiency, while others worry about data privacy and the dehumanization of medicine.”

- Analysis of each perspective: Examine each position's strengths, weaknesses, and underlying assumptions.

“While AI algorithms can analyze vast amounts of medical data and identify patterns that human doctors might miss, they may also perpetuate biases present in the data or lack the nuanced understanding of human emotions.”

- Exploration of common ground: Identify any common themes or values shared among the different points of view.

“Both proponents and critics of AI in healthcare agree on the importance of ensuring patient privacy and maintaining the human-centric aspect of medicine.”

- Discussion of unresolved questions: Highlight any unanswered questions or areas for further inquiry.

“Despite ongoing debates, many questions remain unanswered, such as how to regulate AI algorithms to ensure fairness and transparency.”

III. Conclusion:

- Restatement of the central question or thesis: Summarize the main question or issue explored in the text.

“In conclusion, the ethical implications of AI in healthcare remain complex and multifaceted, requiring careful consideration of its benefits and risks.”

- Summary of key points: Recap the key points and perspectives discussed in the body paragraphs.

“Throughout this essay, we’ve examined various perspectives on AI in healthcare, from its potential to revolutionize patient care to concerns about privacy and bias.”

- Reflection on the importance: Consider the broader implications of the exploration and any insights gained.

“This exploration underscores the need for ongoing dialogue and ethical oversight to ensure that AI technologies are deployed responsibly and in the best interest of patients and society.”

- Suggestions for future research: Offer suggestions for further investigation.

“Moving forward, future research should focus on developing ethical guidelines and regulatory frameworks to guide the responsible development and deployment of AI in healthcare.”

This outline offers a structured framework for organizing an exploratory essay and supports thorough analysis of complex topics. Adapt and extend it as needed to fit your assignment's requirements or the complexity of your chosen topic.

How to write an exploratory essay: basic steps

Writing an exploratory essay differs from writing an exemplification essay or any other type of academic paper. Below, we outline five essential steps to guide you through the exploratory research process.

Step 1: Choose your topic.

How do you start an exploratory essay? Begin by selecting a topic that sparks your curiosity. Choose subjects with diverse perspectives and avoid binary arguments. For example, consider topics like “Gender Inequality in the Workplace: Exploring Root Causes” or “The Rise of Online Learning: Transforming Education in the Digital Age.”

Step 2: Identify viewpoints.

Dive into your topic by identifying at least three distinct positions to analyze. Conduct thorough research and review the current literature to uncover the various arguments and counterarguments.

Step 3: Understand your audience.

For better essay planning, consider your audience’s perspectives and biases. Explore how their beliefs shape their attitudes toward the topic.

Step 4: Address your research inquiries and concerns.

Respond thoughtfully to the questions raised throughout your study. Consider each question your research raises and determine the most effective way to address it. Be proactive in identifying and tackling any concerns that arise from your work.

Step 5: Create your final project.

Complete the writing process equipped with your newfound insights. Draw inspiration from sample essays, and make sure your writing is coherent and flows smoothly.

By following these steps, you can write research-based papers with confidence and finesse.

How to make your writing stand out: dos and don’ts

If you are unsure how to write an exploratory essay, the following dos and don’ts will help you produce well-thought-out, compelling work. These rules are also useful for anyone learning how to write a scholarship essay.

Dos:

- Choose an intriguing topic: Select one that sparks curiosity and offers room for exploration. Look for subjects with multiple perspectives or unresolved issues.

- Thoroughly investigate the topic: Conduct extensive research to gather relevant information and data. Use credible sources to support your exploration and analysis.

- Organize your thoughts: Structure your document clearly and logically. Use headings and subheadings to guide the reader through your research process.

- Be open-minded: Approach the topic with an open mind. Embrace ambiguity and be willing to reconsider your initial assumptions as you study different perspectives.

- Consider several viewpoints: Explore various angles and aspects related to your topic. Show a comprehensive understanding of different opinions and arguments.

- Provide insightful analysis: Offer thoughtful analysis and interpretation of the information gathered. Highlight connections between different viewpoints and draw meaningful conclusions.

Don’ts:

- Avoid delaying your task: Start early to manage your time effectively. Procrastination can hinder your progress. With a limited timeframe to complete each step, begin your work promptly to allow ample time for crafting a well-developed paper.

- Don’t forget to take notes throughout your research process: Record key points and insights as you gather information. Reviewing your notes at the end of your research allows you to reorganize your ideas logically and ensure seamless transitions between them.

- Don’t neglect a structured outline: An exploratory essay outline serves as a roadmap for your study. It ensures you cover all necessary information in your writing and provides a clear framework for your ideas.

- Avoid oversimplification: Don’t explain your topic in simplistic terms. Avoid reducing complex issues to binary choices or flattening nuanced perspectives.

- Refrain from ignoring counterarguments: Acknowledge and address counterarguments to your viewpoint. Failing to consider opposing opinions can weaken the credibility of your exploratory essay.

- Steer clear of bias: Guard against bias and partiality in your study. Present information objectively and refrain from imposing your own beliefs onto the analysis.

- Avoid rushing the process: Take your time to analyze the topic and conduct research thoroughly. Rushing through the process can result in shallow analysis and missed opportunities for insight.

- Don’t neglect proofreading and editing: Mistakes are inevitable, so allocate sufficient time to review and refine your paper. Verify its coherence and accuracy, and have confidence in your ability to produce a polished final product.

Final thoughts

Becoming proficient in academic writing opens doors to intellectual exploration and discovery. By staying curious, weighing different viewpoints, and remaining confident even when the answers are unclear, you can discover new ideas and better understand complicated topics.

Written by Elizabeth Miller

Seasoned academic writer, nurturing students' writing skills. Expert in citation and plagiarism. Contributing to EduBirdie since 2019. Aspiring author and dedicated volunteer. You will never have to worry about plagiarism as I write essays 100% from scratch. Vast experience in English, History, Ethics, and more.

Purdue Online Writing Lab Purdue OWL® College of Liberal Arts

Introductions, Body Paragraphs, and Conclusions for Exploratory Papers

This page is brought to you by the OWL at Purdue University. When printing this page, you must include the entire legal notice.

Copyright ©1995-2018 by The Writing Lab & The OWL at Purdue and Purdue University. All rights reserved. This material may not be published, reproduced, broadcast, rewritten, or redistributed without permission. Use of this site constitutes acceptance of our terms and conditions of fair use.

Many paper assignments call for you to establish a position and defend that position with an effective argument. Some assignments, however, are not argumentative but exploratory. Exploratory essays ask questions and gather information that may answer those questions. The main point of the exploratory, or inquiry, essay is not to find definite answers; it is to conduct inquiry into a topic, gather information, and share that information with readers.

Introductions for Exploratory Essays

The introduction is the broad beginning of the paper that answers three important questions:

- What is this?

- Why am I reading it?

- What do you want me to do?

You should answer these questions in an exploratory essay by doing the following:

- Set the context – provide general information about the main idea, explaining the situation so the reader can make sense of the topic and the questions you will ask

- State why the main idea is important – tell the reader why they should care and keep reading. Your goal is to create a compelling, clear, and educational essay people will want to read and act upon

- State your research question – compose a question or two that clearly communicate what you want to discover and why you are interested in the topic. An overview of the types of sources you explored might follow your research question.

If your inquiry paper is long, you may want to forecast how you explored your topic by outlining the structure of your paper, the sources you considered, and the information you found in these sources. Your forecast could read something like this:

In order to explore my topic and try to answer my research question, I began with news sources. I then conducted research in scholarly sources, such as peer-reviewed journals. Lastly, I conducted an interview with a primary source. All these sources gave me a better understanding of my topic, and even though I was not able to fully answer my research questions, I learned a lot and narrowed my subject for the next paper assignment, the problem-solution report.

For this OWL resource, the example exploratory process investigates a local problem to gather more information so that eventually a solution may be suggested.

Identify a problem facing your university (institution, students, faculty, staff) or the local area and conduct exploratory research to find out as much as you can about the following:

- Causes of the problem and other contributing factors

- People/institutions involved in the situation: decision makers and stakeholders

- Possible solutions to the problem.

You do not have to argue for a solution to the problem at this point. The point of the exploratory essay is to ask an inquiry question and find out as much as you can to try to answer your question. Then write about your inquiry and findings.

Tips for crafting an exploratory essay:

1. Choose a compelling topic that sparks curiosity and invites exploration.
2. Conduct thorough research to gather diverse perspectives and sources on the topic.
3. Clearly define the problem or question you intend to explore in your essay.
4. …

There are three key characteristics of an exploratory essay. Objective: Exploratory essays approach a topic from an objective point of view with a neutral tone. Rather than trying to solve the problem, this essay looks at the topic through a variety of lenses. In an exploratory essay, the author seeks to clearly explain the different viewpoints.

An exploratory essay uses the same basic structure as other essays: an introduction, body paragraphs, and a conclusion. The introduction sets up the context for your topic, explains why the topic is worth studying, and states your primary research question(s). The body paragraphs cover the research that you ...